Upload 63 files

Added inference code, demo data, config, and Slurm script

This view is limited to 50 files because it contains too many changes.


- .gitattributes +13 -0

- NoMAISI_logo.png +3 -0

- configs/config_maisi3d-rflow.json +150 -0

- configs/infr_config_NoMAISI_controlnet.json +17 -0

- configs/infr_env_NoMAISI_DLCSD24_demo.json +11 -0

- data/DLCS_1419_seg_sh.nii.gz +3 -0

- data/infr_NoMAISI_DLCSD24_demo_512xy_256z_771p25m_dataset.json +32 -0

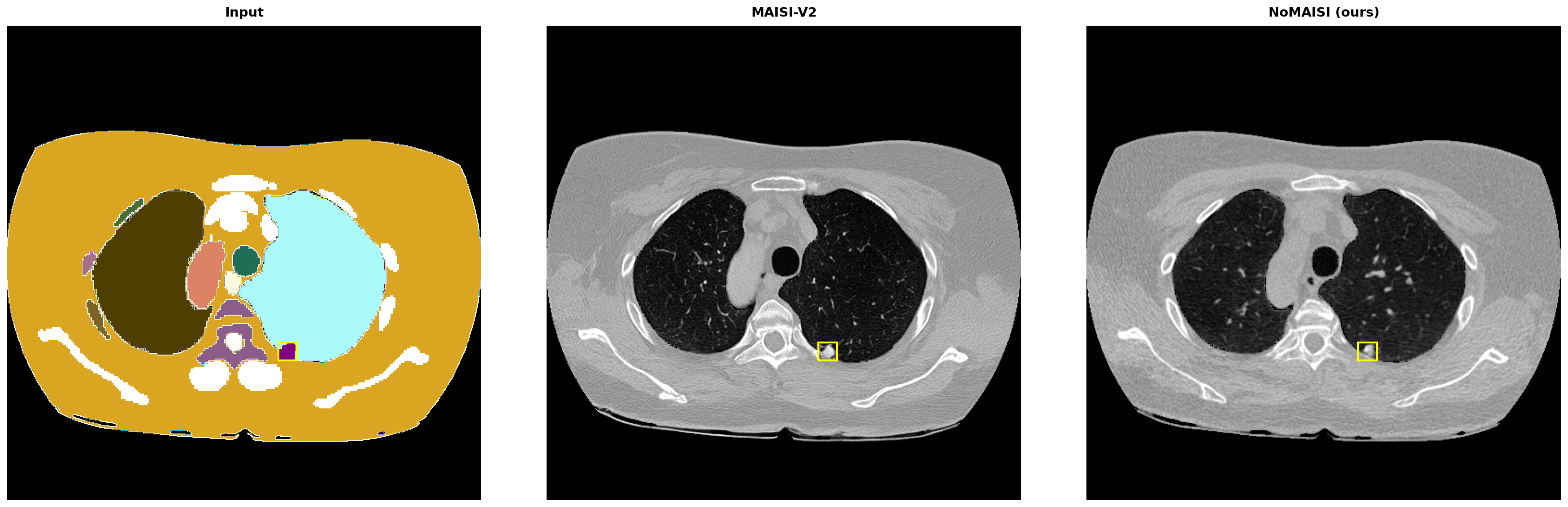

- doc/images/DLCS_1419_ann0_slice134_triple.png +3 -0

- doc/images/DLCS_1419_ann1_slice204_triple.png +3 -0

- doc/images/DLCS_1443_ann1_slice125_triple.png +3 -0

- doc/images/DLCS_1446_ann0_slice122_triple.png +3 -0

- doc/images/DLCS_1447_ann0_slice206_triple.png +3 -0

- doc/images/DLCS_1453_ann0_slice204_triple.png +3 -0

- doc/images/DLCS_1508_ann0_slice46_triple.png +3 -0

- doc/images/DLCS_1519_ann3_slice155_triple.png +3 -0

- doc/images/GanAI_fid_scatter_marker_legend.png +3 -0

- doc/images/NoMAISI_train_and_infer.png +3 -0

- doc/images/TaskCls.png +3 -0

- doc/images/workflow.png +3 -0

- inference.sub +26 -0

- logs/NoMAISI-infr-log-38612.out +18 -0

- scripts/__init__.py +10 -0

- scripts/__pycache__/__init__.cpython-310.pyc +0 -0

- scripts/__pycache__/augmentation.cpython-310.pyc +0 -0

- scripts/__pycache__/diff_model_create_training_data.cpython-310.pyc +0 -0

- scripts/__pycache__/diff_model_setting.cpython-310.pyc +0 -0

- scripts/__pycache__/find_masks.cpython-310.pyc +0 -0

- scripts/__pycache__/infer_controlnet.cpython-310.pyc +0 -0

- scripts/__pycache__/infer_testV2_controlnet.cpython-310.pyc +0 -0

- scripts/__pycache__/infer_test_controlnet.cpython-310.pyc +0 -0

- scripts/__pycache__/inference.cpython-310.pyc +0 -0

- scripts/__pycache__/quality_check.cpython-310.pyc +0 -0

- scripts/__pycache__/rectified_flow.cpython-310.pyc +0 -0

- scripts/__pycache__/sample.cpython-310.pyc +0 -0

- scripts/__pycache__/train_controlnet.cpython-310.pyc +0 -0

- scripts/__pycache__/utils.cpython-310.pyc +0 -0

- scripts/__pycache__/utils_plot.cpython-310.pyc +0 -0

- scripts/augmentation.py +373 -0

- scripts/compute_fid_2-5d_ct.py +747 -0

- scripts/diff_model_create_training_data.py +231 -0

- scripts/diff_model_infer.py +358 -0

- scripts/diff_model_setting.py +92 -0

- scripts/diff_model_train.py +499 -0

- scripts/find_masks.py +157 -0

- scripts/infer_controlnet.py +222 -0

- scripts/infer_testV2_controlnet.py +220 -0

- scripts/infer_test_controlnet.py +220 -0

- scripts/inference.py +299 -0

- scripts/quality_check.py +149 -0

- scripts/rectified_flow.py +322 -0

.gitattributes

CHANGED

@@ -33,3 +33,16 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1419_ann0_slice134_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1419_ann1_slice204_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1443_ann1_slice125_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1446_ann0_slice122_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1447_ann0_slice206_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1453_ann0_slice204_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1508_ann0_slice46_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/DLCS_1519_ann3_slice155_triple.png filter=lfs diff=lfs merge=lfs -text
+doc/images/GanAI_fid_scatter_marker_legend.png filter=lfs diff=lfs merge=lfs -text
+doc/images/NoMAISI_train_and_infer.png filter=lfs diff=lfs merge=lfs -text
+doc/images/TaskCls.png filter=lfs diff=lfs merge=lfs -text
+doc/images/workflow.png filter=lfs diff=lfs merge=lfs -text
+NoMAISI_logo.png filter=lfs diff=lfs merge=lfs -text

NoMAISI_logo.png

ADDED (Git LFS)

configs/config_maisi3d-rflow.json

ADDED

@@ -0,0 +1,150 @@
+{
+    "spatial_dims": 3,
+    "image_channels": 1,
+    "latent_channels": 4,
+    "include_body_region": false,
+    "mask_generation_latent_shape": [
+        4,
+        64,
+        64,
+        64
+    ],
+    "autoencoder_def": {
+        "_target_": "monai.apps.generation.maisi.networks.autoencoderkl_maisi.AutoencoderKlMaisi",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@image_channels",
+        "out_channels": "@image_channels",
+        "latent_channels": "@latent_channels",
+        "num_channels": [
+            64,
+            128,
+            256
+        ],
+        "num_res_blocks": [2,2,2],
+        "norm_num_groups": 32,
+        "norm_eps": 1e-06,
+        "attention_levels": [
+            false,
+            false,
+            false
+        ],
+        "with_encoder_nonlocal_attn": false,
+        "with_decoder_nonlocal_attn": false,
+        "use_checkpointing": false,
+        "use_convtranspose": false,
+        "norm_float16": true,
+        "num_splits": 4,
+        "dim_split": 1
+    },
+    "diffusion_unet_def": {
+        "_target_": "monai.apps.generation.maisi.networks.diffusion_model_unet_maisi.DiffusionModelUNetMaisi",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@latent_channels",
+        "out_channels": "@latent_channels",
+        "num_channels": [64, 128, 256, 512],
+        "attention_levels": [
+            false,
+            false,
+            true,
+            true
+        ],
+        "num_head_channels": [
+            0,
+            0,
+            32,
+            32
+        ],
+        "num_res_blocks": 2,
+        "use_flash_attention": true,
+        "include_top_region_index_input": "@include_body_region",
+        "include_bottom_region_index_input": "@include_body_region",
+        "include_spacing_input": true,
+        "num_class_embeds": 128,
+        "resblock_updown": true,
+        "include_fc": true
+    },
+    "controlnet_def": {
+        "_target_": "monai.apps.generation.maisi.networks.controlnet_maisi.ControlNetMaisi",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@latent_channels",
+        "num_channels": [64, 128, 256, 512],
+        "attention_levels": [
+            false,
+            false,
+            true,
+            true
+        ],
+        "num_head_channels": [
+            0,
+            0,
+            32,
+            32
+        ],
+        "num_res_blocks": 2,
+        "use_flash_attention": true,
+        "conditioning_embedding_in_channels": 8,
+        "conditioning_embedding_num_channels": [8, 32, 64],
+        "num_class_embeds": 128,
+        "resblock_updown": true,
+        "include_fc": true
+    },
+    "mask_generation_autoencoder_def": {
+        "_target_": "monai.apps.generation.maisi.networks.autoencoderkl_maisi.AutoencoderKlMaisi",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": 8,
+        "out_channels": 125,
+        "latent_channels": "@latent_channels",
+        "num_channels": [
+            32,
+            64,
+            128
+        ],
+        "num_res_blocks": [1, 2, 2],
+        "norm_num_groups": 32,
+        "norm_eps": 1e-06,
+        "attention_levels": [
+            false,
+            false,
+            false
+        ],
+        "with_encoder_nonlocal_attn": false,
+        "with_decoder_nonlocal_attn": false,
+        "use_flash_attention": false,
+        "use_checkpointing": true,
+        "use_convtranspose": true,
+        "norm_float16": true,
+        "num_splits": 8,
+        "dim_split": 1
+    },
+    "mask_generation_diffusion_def": {
+        "_target_": "monai.networks.nets.diffusion_model_unet.DiffusionModelUNet",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@latent_channels",
+        "out_channels": "@latent_channels",
+        "channels":[64, 128, 256, 512],
+        "attention_levels":[false, false, true, true],
+        "num_head_channels":[0, 0, 32, 32],
+        "num_res_blocks": 2,
+        "use_flash_attention": true,
+        "with_conditioning": true,
+        "upcast_attention": true,
+        "cross_attention_dim": 10
+    },
+    "mask_generation_scale_factor": 1.0055984258651733,
+    "noise_scheduler": {
+        "_target_": "monai.networks.schedulers.rectified_flow.RFlowScheduler",
+        "num_train_timesteps": 1000,
+        "use_discrete_timesteps": false,
+        "use_timestep_transform": true,
+        "sample_method": "uniform",
+        "scale":1.4
+    },
+    "mask_generation_noise_scheduler": {
+        "_target_": "monai.networks.schedulers.ddpm.DDPMScheduler",
+        "num_train_timesteps": 1000,
+        "beta_start": 0.0015,
+        "beta_end": 0.0195,
+        "schedule": "scaled_linear_beta",
+        "clip_sample": false
+    }
+}
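Note on the `"@spatial_dims"`-style values in `configs/config_maisi3d-rflow.json`: they are references to top-level keys of the same file, so each network definition picks up the shared `spatial_dims`, `image_channels`, and `latent_channels` values. The sketch below only illustrates that substitution. `resolve_refs` is a hypothetical helper written for this note, not MONAI's config parser, and it ignores `_target_` instantiation entirely.

```python
import json


def resolve_refs(config):
    """Replace every "@key" string with the top-level value it names (illustrative only)."""

    def _resolve(value):
        if isinstance(value, str) and value.startswith("@"):
            # Look the referenced key up at the top level of the config.
            return _resolve(config[value[1:]])
        if isinstance(value, dict):
            return {key: _resolve(item) for key, item in value.items()}
        if isinstance(value, list):
            return [_resolve(item) for item in value]
        return value

    return _resolve(config)


# A small excerpt of the autoencoder section from the config above.
raw = json.loads(
    """
    {
        "spatial_dims": 3,
        "image_channels": 1,
        "latent_channels": 4,
        "autoencoder_def": {
            "spatial_dims": "@spatial_dims",
            "in_channels": "@image_channels",
            "out_channels": "@image_channels",
            "latent_channels": "@latent_channels"
        }
    }
    """
)

resolved = resolve_refs(raw)
print(resolved["autoencoder_def"])
```

Keeping the shared values at the top level means a change such as a different `latent_channels` only has to be made once.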

configs/infr_config_NoMAISI_controlnet.json

ADDED

@@ -0,0 +1,17 @@
+{
+    "controlnet_train": {
+        "batch_size": 2,
+        "cache_rate": 0.0,
+        "fold": 1,
+        "lr": 1e-5,
+        "n_epochs": 500,
+        "weighted_loss_label": [23],
+        "weighted_loss": 100
+    },
+    "controlnet_infer": {
+        "num_inference_steps": 30,
+        "autoencoder_sliding_window_infer_size": [80, 80, 64],
+        "autoencoder_sliding_window_infer_overlap": 0.25,
+        "modality": 1
+    }
+}
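Note on `autoencoder_sliding_window_infer_size` and `autoencoder_sliding_window_infer_overlap`: these control how the latent volume is tiled when it is decoded. The sketch below works through the usual sliding-window arithmetic for the 512 x 512 x 256 demo volume. It assumes a 4x spatial compression in the autoencoder (so a 128 x 128 x 64 latent) and a stride of `int(roi * (1 - overlap))`; neither is confirmed by the source, and `count_windows` is a hypothetical helper.

```python
import math


def count_windows(image_size, roi_size, overlap):
    """Count sliding-window positions per axis and multiply them together."""
    total = 1
    for size, roi in zip(image_size, roi_size):
        roi = min(roi, size)  # a window never exceeds the volume
        interval = max(int(roi * (1.0 - overlap)), 1)  # stride between window starts
        total *= math.ceil((size - roi) / interval) + 1
    return total


# Assumed latent shape: image dimensions divided by an assumed 4x compression.
latent_shape = [512 // 4, 512 // 4, 256 // 4]
n_windows = count_windows(latent_shape, [80, 80, 64], 0.25)
print(n_windows)
```

Under these assumptions the count is 2 x 2 x 1 = 4 windows, which happens to equal the 4 iterations shown by the decoding progress bar in the log, although the source does not confirm that this is what the bar counts.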

configs/infr_env_NoMAISI_DLCSD24_demo.json

ADDED

@@ -0,0 +1,11 @@
+{
+    "model_dir": "./models/",
+    "output_dir": "./outputs/NoMAISI_DLCSD24_demo_512xy_256z_771p25m",
+    "tfevent_path": "./outputs/tfevent",
+    "trained_autoencoder_path": "./models/autoencoder.pt",
+    "trained_diffusion_path": "./models/diffusion_unet.pt",
+    "trained_controlnet_path": "./models/Experiments_NoMAISI_512xy_256z_771p25m_finetune_500epoch_best.pt",
+    "exp_name": "NoMAISI_DLCSD24_demo_512xy_256z_771p25m",
+    "data_base_dir": ["/home/ft42/NoMAISI/data"],
+    "json_data_list": ["/home/ft42/NoMAISI/data/infr_NoMAISI_DLCSD24_demo_512xy_256z_771p25m_dataset.json"]
+}

data/DLCS_1419_seg_sh.nii.gz

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:83da8dbf3b165023f3ffcec571fe5766177b65aabfa143f3a0bef5be41af757b
+size 2265286
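Note on the three-line file above: it is a Git LFS pointer, not the segmentation volume itself. The pointer records the SHA-256 digest and byte size of the real `data/DLCS_1419_seg_sh.nii.gz`. A minimal sketch of how a downloaded file could be checked against such a pointer follows; `parse_lfs_pointer` and `matches_pointer` are hypothetical helpers written for this note.

```python
import hashlib


def parse_lfs_pointer(text):
    """Split a Git LFS pointer into its version, hash algorithm, digest, and size."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(data, pointer):
    """Return True when the bytes have the size and digest recorded in the pointer."""
    if len(data) != pointer["size"]:
        return False
    return hashlib.new(pointer["algo"], data).hexdigest() == pointer["digest"]


pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:83da8dbf3b165023f3ffcec571fe5766177b65aabfa143f3a0bef5be41af757b\n"
    "size 2265286\n"
)
print(pointer["algo"], pointer["size"])
```

In practice the bytes would come from reading the downloaded file in binary mode; a file that is only a few hundred bytes long usually means the pointer itself was fetched instead of the LFS object.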

data/infr_NoMAISI_DLCSD24_demo_512xy_256z_771p25m_dataset.json

ADDED

@@ -0,0 +1,32 @@
+{
+    "name": "NoMAISI_DLCSD24_demo_512xy_256z_771p25m",
+    "numTest": 1,
+    "testing": [
+        {
+
+            "label": "DLCS_1419_seg_sh.nii.gz",
+            "fold": 0,
+            "dim": [
+                512,
+                512,
+                256
+            ],
+            "spacing": [
+                0.703125,
+                0.703125,
+                1.25
+            ],
+            "top_region_index": [
+                0,
+                1,
+                0,
+                0
+            ],
+            "bottom_region_index": [
+                0,
+                0,
+                1,
+                0
+            ]
+        }]
+}
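Note on `dim` and `spacing` in the dataset entry: together they fix the physical size of the volume to be generated. The sketch below multiplies them out, assuming the spacing is given in millimetres (the usual NIfTI convention, not stated in the source). `physical_extent_mm` is a hypothetical helper.

```python
def physical_extent_mm(dim, spacing):
    """Voxel count times voxel spacing along each axis."""
    return [n * s for n, s in zip(dim, spacing)]


# Values copied from the "testing" entry of the dataset JSON above.
entry = {
    "dim": [512, 512, 256],
    "spacing": [0.703125, 0.703125, 1.25],
}

extent = physical_extent_mm(entry["dim"], entry["spacing"])
print(extent)  # [360.0, 360.0, 320.0]
```

Under that assumption the demo case covers a 360 x 360 x 320 mm field of view.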

doc/images/DLCS_1419_ann0_slice134_triple.png

ADDED (Git LFS)

doc/images/DLCS_1419_ann1_slice204_triple.png

ADDED (Git LFS)

doc/images/DLCS_1443_ann1_slice125_triple.png

ADDED (Git LFS)

doc/images/DLCS_1446_ann0_slice122_triple.png

ADDED (Git LFS)

doc/images/DLCS_1447_ann0_slice206_triple.png

ADDED (Git LFS)

doc/images/DLCS_1453_ann0_slice204_triple.png

ADDED (Git LFS)

doc/images/DLCS_1508_ann0_slice46_triple.png

ADDED (Git LFS)

doc/images/DLCS_1519_ann3_slice155_triple.png

ADDED (Git LFS)

doc/images/GanAI_fid_scatter_marker_legend.png

ADDED (Git LFS)

doc/images/NoMAISI_train_and_infer.png

ADDED (Git LFS)

doc/images/TaskCls.png

ADDED (Git LFS)

doc/images/workflow.png

ADDED (Git LFS)

inference.sub

ADDED

@@ -0,0 +1,26 @@
+#!/bin/bash
+
+#SBATCH --job-name=nomaisi
+#SBATCH --mail-type=END,FAIL
+#SBATCH --mail-user=ft42@duke.edu
+#SBATCH -p vram48
+#SBATCH --ntasks=1
+#SBATCH --gpus=1 # One GPU for the job; choose more if the model is capable of multi-GPU training
+#SBATCH --cpus-per-task=16 # Increase for CPU-intensive jobs (nnU-Net, for example, demands a lot of CPU)
+
+## Make sure a logs directory exists in the current directory (the same directory as this script)
+#SBATCH --output=logs/NoMAISI-infr-log-%j.out
+#SBATCH --error=logs/NoMAISI-infr-log-%j.out
+
+
+
+echo "Job starting"
+echo "GPUs Given: $CUDA_VISIBLE_DEVICES"
+module load miniconda/py39_4.12.0
+source activate monai-auto3dseg
+
+
+# Set the MONAI data directory (adjust to your checkout)
+export MONAI_DATA_DIRECTORY=/home/ft42/NoMAISI/
+
+python -m scripts.infer_testV2_controlnet -c ./configs/config_maisi3d-rflow.json -e ./configs/infr_env_NoMAISI_DLCSD24_demo.json -t ./configs/infr_config_NoMAISI_controlnet.json

logs/NoMAISI-infr-log-38612.out

ADDED

@@ -0,0 +1,18 @@
+Job starting
+GPUs Given: 0
+[2025-09-24 13:42:58.511][ INFO](maisi.controlnet.infer) - Number of GPUs: 1
+[2025-09-24 13:42:58.512][ INFO](maisi.controlnet.infer) - World_size: 1
+[2025-09-24 13:42:59.541][ INFO](maisi.controlnet.infer) - Load trained diffusion model from ./models/autoencoder.pt.
+[2025-09-24 13:43:03.285][ INFO](maisi.controlnet.infer) - Load trained diffusion model from ./models/diffusion_unet.pt.
+[2025-09-24 13:43:03.287][ INFO](maisi.controlnet.infer) - loaded scale_factor from diffusion model ckpt -> 1.0311251878738403.
+2025-09-24 13:43:03,824 - INFO - 'dst' model updated: 180 of 231 variables.
+[2025-09-24 13:43:04.077][ INFO](maisi.controlnet.infer) - load trained controlnet model from ./models/Experiments_NoMAISI_512xy_256z_771p25m_finetune_500epoch_best.pt
+[2025-09-24 13:43:07.130][ INFO](root) - `controllable_anatomy_size` is not provided.
+[2025-09-24 13:43:07.133][ INFO](root) - ---- Start generating latent features... ----
+ 97%|█████████▋| 29/30 [00:11<00:00, 2.53it/s]
+[2025-09-24 13:43:19.446][ INFO](root) - ---- DM/ControlNet Latent features generation time: 12.313125371932983 seconds ----
+[2025-09-24 13:43:20.016][ INFO](root) - ---- Start decoding latent features into images... ----
+ 75%|███████▌ | 3/4 [00:11<00:03, 3.79s/it]
+[2025-09-24 13:43:35.252][ INFO](root) - ---- Image VAE decoding time: 15.23531699180603 seconds ----
+2025-09-24 13:43:37,053 INFO image_writer.py:197 - writing: outputs/NoMAISI_DLCSD24_demo_512xy_256z_771p25m/DLCS_1419_seg_sh_image.nii.gz
+2025-09-24 13:43:41,437 INFO image_writer.py:197 - writing: outputs/NoMAISI_DLCSD24_demo_512xy_256z_771p25m/DLCS_1419_seg_sh_label.nii.gz
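Note on the timing lines in the log: the two `---- ... time: N seconds ----` messages give the cost of latent generation and of VAE decoding for the demo case. A small sketch for pulling those numbers out of such a log follows. The regular expression is written against the two lines shown here and may need adjusting for other messages; `stage_timings` is a hypothetical helper.

```python
import re

# Matches "---- <stage> time: <seconds> seconds ----" within a single log line.
TIMING = re.compile(r"-{4} (?P<stage>.+?) time: (?P<seconds>[0-9.]+) seconds -{4}")


def stage_timings(log_text):
    """Map each timed stage name to its duration in seconds."""
    return {m["stage"]: float(m["seconds"]) for m in TIMING.finditer(log_text)}


# The two timing lines copied from logs/NoMAISI-infr-log-38612.out.
sample = (
    "[2025-09-24 13:43:19.446][ INFO](root) - ---- DM/ControlNet Latent features "
    "generation time: 12.313125371932983 seconds ----\n"
    "[2025-09-24 13:43:35.252][ INFO](root) - ---- Image VAE decoding time: "
    "15.23531699180603 seconds ----\n"
)

timings = stage_timings(sample)
for stage, seconds in timings.items():
    print(f"{stage}: {seconds:.2f} s")
print(f"total: {sum(timings.values()):.2f} s")
```

For this run the two stages add up to roughly 27.5 seconds, with decoding taking slightly longer than the 30 sampling steps.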

scripts/__init__.py

ADDED

@@ -0,0 +1,10 @@
+# Copyright (c) MONAI Consortium
+# Licensed under the Apache License, Version 2.0 (the "License");
+# You may not use this file except in compliance with the License.
+# You may obtain a copy of the License at
+# http://www.apache.org/licenses/LICENSE-2.0
+# Unless required by applicable law or agreed to in writing, software
+# distributed under the License is distributed on an "AS IS" BASIS,
+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+# See the License for the specific language governing permissions and
+# limitations under the License.

scripts/__pycache__/__init__.cpython-310.pyc

ADDED

Binary file (140 Bytes).

scripts/__pycache__/augmentation.cpython-310.pyc

ADDED

Binary file (6.81 kB).

scripts/__pycache__/diff_model_create_training_data.cpython-310.pyc

ADDED

Binary file (7.38 kB).

scripts/__pycache__/diff_model_setting.cpython-310.pyc

ADDED

Binary file (2.47 kB).

scripts/__pycache__/find_masks.cpython-310.pyc

ADDED

Binary file (4.48 kB).

scripts/__pycache__/infer_controlnet.cpython-310.pyc

ADDED

Binary file (5.72 kB).

scripts/__pycache__/infer_testV2_controlnet.cpython-310.pyc

ADDED

Binary file (5.76 kB).

scripts/__pycache__/infer_test_controlnet.cpython-310.pyc

ADDED

Binary file (5.75 kB).

scripts/__pycache__/inference.cpython-310.pyc

ADDED

Binary file (7.62 kB).

scripts/__pycache__/quality_check.cpython-310.pyc

ADDED

Binary file (4.39 kB).

scripts/__pycache__/rectified_flow.cpython-310.pyc

ADDED

Binary file (10.9 kB).

scripts/__pycache__/sample.cpython-310.pyc

ADDED

Binary file (31.4 kB).

scripts/__pycache__/train_controlnet.cpython-310.pyc

ADDED

Binary file (8.01 kB).

scripts/__pycache__/utils.cpython-310.pyc

ADDED

Binary file (26.5 kB).

scripts/__pycache__/utils_plot.cpython-310.pyc

ADDED

Binary file (6.66 kB).

scripts/augmentation.py

ADDED

|

@@ -0,0 +1,373 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Copyright (c) MONAI Consortium

|

| 2 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 3 |

+

# you may not use this file except in compliance with the License.

|

| 4 |

+

# You may obtain a copy of the License at

|

| 5 |

+

# http://www.apache.org/licenses/LICENSE-2.0

|

| 6 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 7 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 8 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 9 |

+

# See the License for the specific language governing permissions and

|

| 10 |

+

# limitations under the License.

|

| 11 |

+

|

| 12 |

+

import numpy as np

|

| 13 |

+

import torch

|

| 14 |

+

import torch.nn.functional as F

|

| 15 |

+

from monai.transforms import Rand3DElastic, RandAffine, RandZoom

|

| 16 |

+

from monai.utils import ensure_tuple_rep

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

def erode3d(input_tensor, erosion=3):

|

| 20 |

+

# Define the structuring element

|

| 21 |

+

erosion = ensure_tuple_rep(erosion, 3)

|

| 22 |

+

structuring_element = torch.ones(1, 1, erosion[0], erosion[1], erosion[2]).to(input_tensor.device)

|

| 23 |

+

|

| 24 |

+

# Pad the input tensor to handle border pixels

|

| 25 |

+

input_padded = F.pad(

|

| 26 |

+

input_tensor.float().unsqueeze(0).unsqueeze(0),

|

| 27 |

+

(erosion[0] // 2, erosion[0] // 2, erosion[1] // 2, erosion[1] // 2, erosion[2] // 2, erosion[2] // 2),

|

| 28 |

+

mode="constant",

|

| 29 |

+

value=1.0,

|

| 30 |

+

)

|

| 31 |

+

|

| 32 |

+

# Apply erosion operation

|

| 33 |

+

output = F.conv3d(input_padded, structuring_element, padding=0)

|

| 34 |

+

|

| 35 |

+

# Set output values based on the minimum value within the structuring element

|

| 36 |

+

output = torch.where(output == torch.sum(structuring_element), 1.0, 0.0)

|

| 37 |

+

|

| 38 |

+

return output.squeeze(0).squeeze(0)

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

def dilate3d(input_tensor, erosion=3):

|

| 42 |

+

# Define the structuring element

|

| 43 |

+

erosion = ensure_tuple_rep(erosion, 3)

|

| 44 |

+

structuring_element = torch.ones(1, 1, erosion[0], erosion[1], erosion[2]).to(input_tensor.device)

|

| 45 |

+

|

| 46 |

+

# Pad the input tensor to handle border pixels

|

| 47 |

+

input_padded = F.pad(

|

| 48 |

+

input_tensor.float().unsqueeze(0).unsqueeze(0),

|

| 49 |

+

(erosion[0] // 2, erosion[0] // 2, erosion[1] // 2, erosion[1] // 2, erosion[2] // 2, erosion[2] // 2),

|

| 50 |

+

mode="constant",

|

| 51 |

+

value=1.0,

|

| 52 |

+

)

|

| 53 |

+

|

| 54 |

+

# Apply erosion operation

|

| 55 |

+

output = F.conv3d(input_padded, structuring_element, padding=0)

|

| 56 |

+

|

| 57 |

+

# Set output values based on the minimum value within the structuring element

|

| 58 |

+

output = torch.where(output > 0, 1.0, 0.0)

|

| 59 |

+

|

| 60 |

+

return output.squeeze(0).squeeze(0)

|

| 61 |

+

|

| 62 |

+

|

| 63 |

+

def augmentation_tumor_bone(pt_nda, output_size, random_seed=None):

|

| 64 |

+

volume = pt_nda.squeeze(0)

|

| 65 |

+

real_l_volume_ = torch.zeros_like(volume)

|

| 66 |

+

real_l_volume_[volume == 128] = 1

|

| 67 |

+

real_l_volume_ = real_l_volume_.to(torch.uint8)

|

| 68 |

+

|

| 69 |

+

elastic = RandAffine(

|

| 70 |

+

mode="nearest",

|

| 71 |

+

prob=1.0,

|

| 72 |

+

translate_range=(5, 5, 0),

|

| 73 |

+

rotate_range=(0, 0, 0.1),

|

| 74 |

+

scale_range=(0.15, 0.15, 0),

|

| 75 |

+

padding_mode="zeros",

|

| 76 |

+

)

|

| 77 |

+

elastic.set_random_state(seed=random_seed)

|

| 78 |

+

|

| 79 |

+

tumor_szie = torch.sum((real_l_volume_ > 0).float())

|

| 80 |

+

###########################

|

| 81 |

+

# overwrite the real lesion region in the pseudo-label with label 200

|

| 82 |

+

volume[real_l_volume_ > 0] = 200

|

| 83 |

+

###########################

|

| 84 |

+

if tumor_szie > 0:

|

| 85 |

+

# get organ mask

|

| 86 |

+

organ_mask = (

|

| 87 |

+

torch.logical_and(33 <= volume, volume <= 56).float()

|

| 88 |

+

+ torch.logical_and(63 <= volume, volume <= 97).float()

|

| 89 |

+

+ (volume == 127).float()

|

| 90 |

+

+ (volume == 114).float()

|

| 91 |

+

+ real_l_volume_

|

| 92 |

+

)

|

| 93 |

+

organ_mask = (organ_mask > 0).float()

|

| 94 |

+

cnt = 0

|

| 95 |

+

while True:

|

| 96 |

+

threshold = 0.8 if cnt < 40 else 0.75

|

| 97 |

+

real_l_volume = real_l_volume_

|

| 98 |

+

# randomly distort the mask

|

| 99 |

+

distored_mask = elastic((real_l_volume > 0).cuda(), spatial_size=tuple(output_size)).as_tensor()

|

| 100 |

+

real_l_volume = distored_mask * organ_mask

|

| 101 |

+

cnt += 1

|

| 102 |

+

print(torch.sum(real_l_volume), "|", tumor_szie * threshold)

|

| 103 |

+

if torch.sum(real_l_volume) >= tumor_szie * threshold:

|

| 104 |

+

real_l_volume = dilate3d(real_l_volume.squeeze(0), erosion=5)

|

| 105 |

+

real_l_volume = erode3d(real_l_volume, erosion=5).unsqueeze(0).to(torch.uint8)

|

| 106 |

+

break

|

| 107 |

+

else:

|

| 108 |

+

real_l_volume = real_l_volume_

|

| 109 |

+

|

| 110 |

+

volume[real_l_volume == 1] = 128

|

| 111 |

+

|

| 112 |

+

pt_nda = volume.unsqueeze(0)

|

| 113 |

+

return pt_nda

|

| 114 |

+

|

| 115 |

+

|
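The function above retries the random transform until at least a threshold fraction of the lesion still lies inside the organ mask, and its `while True` loop has no upper bound on attempts. The sketch below shows one way such a rejection loop could be bounded, with a fallback to the original placement. It is an illustration only: `place_with_retries`, the set-of-indices representation and the integer shift standing in for the MONAI transform are all hypothetical.

```python
import random


def place_with_retries(lesion, organ, max_tries=40, threshold=0.8, seed=None):
    """Shift `lesion` (a set of voxel indices) until enough of it lies inside `organ`.

    Returns the accepted placement, or the original lesion if no attempt succeeds.
    """
    rng = random.Random(seed)
    needed = len(lesion) * threshold
    for _ in range(max_tries):
        shift = rng.randint(-5, 5)  # hypothetical stand-in for the random transform
        candidate = {v + shift for v in lesion} & organ  # keep only voxels inside the organ
        if len(candidate) >= needed:
            return candidate
    return set(lesion)  # fallback: leave the lesion where it was


organ = set(range(0, 50))
lesion = set(range(20, 30))
placed = place_with_retries(lesion, organ, seed=0)
```

Bounding the loop trades a guaranteed augmentation for a guaranteed return, which matters when a lesion sits so close to the organ boundary that few transforms keep it inside.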

| 116 |

+

def augmentation_tumor_liver(pt_nda, output_size, random_seed=None):

|

| 117 |

+

volume = pt_nda.squeeze(0)

|

| 118 |

+

real_l_volume_ = torch.zeros_like(volume)

|

| 119 |

+

real_l_volume_[volume == 1] = 1

|

| 120 |

+

real_l_volume_[volume == 26] = 2

|

| 121 |

+

real_l_volume_ = real_l_volume_.to(torch.uint8)

|

| 122 |

+

|

| 123 |

+

elastic = Rand3DElastic(

|

| 124 |

+

mode="nearest",

|

| 125 |

+

prob=1.0,

|

| 126 |

+

sigma_range=(5, 8),

|

| 127 |

+

magnitude_range=(100, 200),

|

| 128 |

+

translate_range=(10, 10, 10),

|

| 129 |

+

rotate_range=(np.pi / 36, np.pi / 36, np.pi / 36),

|

| 130 |

+

scale_range=(0.2, 0.2, 0.2),

|

| 131 |

+

padding_mode="zeros",

|

| 132 |

+

)

|

| 133 |

+

elastic.set_random_state(seed=random_seed)

|

| 134 |

+

|

| 135 |

+

tumor_szie = torch.sum(real_l_volume_ == 2)

|

| 136 |

+

###########################

|

| 137 |

+

# remove the predicted organ and tumor labels

|

| 138 |

+

volume[volume == 1] = 0

|

| 139 |

+

volume[volume == 26] = 0

|

| 140 |

+

# before moving the tumor mask, fill its original location with the organ label

|

| 141 |

+

volume[real_l_volume_ == 1] = 1

|

| 142 |

+

volume[real_l_volume_ == 2] = 1

|

| 143 |

+

###########################

|

| 144 |

+

while True:

|

| 145 |

+

real_l_volume = real_l_volume_

|

| 146 |

+

# randomly distort the mask

|

| 147 |

+

real_l_volume = elastic((real_l_volume == 2).cuda(), spatial_size=tuple(output_size)).as_tensor()

|

| 148 |

+

# get organ mask

|

| 149 |

+

organ_mask = (real_l_volume_ == 1).float() + (real_l_volume_ == 2).float()

|

| 150 |

+

|

| 151 |

+

organ_mask = dilate3d(organ_mask.squeeze(0), erosion=5)

|

| 152 |

+

organ_mask = erode3d(organ_mask, erosion=5).unsqueeze(0)

|

| 153 |

+

real_l_volume = real_l_volume * organ_mask

|

| 154 |

+

print(torch.sum(real_l_volume), "|", tumor_szie * 0.80)

|

| 155 |

+

if torch.sum(real_l_volume) >= tumor_szie * 0.80:

|

| 156 |

+

real_l_volume = dilate3d(real_l_volume.squeeze(0), erosion=5)

|

| 157 |

+

real_l_volume = erode3d(real_l_volume, erosion=5).unsqueeze(0)

|

| 158 |

+

break

|

| 159 |

+

|

| 160 |

+

volume[real_l_volume == 1] = 26

|

| 161 |

+

|

| 162 |

+

pt_nda = volume.unsqueeze(0)

|

| 163 |

+

return pt_nda

|

| 164 |

+

|

| 165 |

+

|

| 166 |

+

def augmentation_tumor_lung(pt_nda, output_size, random_seed=None):

|

| 167 |

+

volume = pt_nda.squeeze(0)

|

| 168 |

+

real_l_volume_ = torch.zeros_like(volume)

|

| 169 |

+

real_l_volume_[volume == 23] = 1

|

| 170 |

+

real_l_volume_ = real_l_volume_.to(torch.uint8)

|

| 171 |

+

|

| 172 |

+

elastic = Rand3DElastic(

|

| 173 |

+

mode="nearest",

|

| 174 |

+

prob=1.0,

|

| 175 |

+

sigma_range=(5, 8),

|

| 176 |

+

magnitude_range=(100, 200),

|

| 177 |

+

translate_range=(20, 20, 20),

|

| 178 |

+

rotate_range=(np.pi / 36, np.pi / 36, np.pi),

|

| 179 |

+

scale_range=(0.15, 0.15, 0.15),

|

| 180 |

+

padding_mode="zeros",

|

| 181 |

+

)

|

| 182 |

+

elastic.set_random_state(seed=random_seed)

|

| 183 |

+

|

| 184 |

+

tumor_szie = torch.sum(real_l_volume_)

|

| 185 |

+

# before moving the lung tumor mask, fill its original location with the surrounding lung label

|

| 186 |

+

new_real_l_volume_ = dilate3d(real_l_volume_.squeeze(0), erosion=3)

|

| 187 |

+

new_real_l_volume_ = new_real_l_volume_.unsqueeze(0)

|

| 188 |

+

new_real_l_volume_[real_l_volume_ > 0] = 0

|

| 189 |

+

new_real_l_volume_[volume < 28] = 0

|

| 190 |

+

new_real_l_volume_[volume > 32] = 0

|

| 191 |

+

tmp = volume[(volume * new_real_l_volume_).nonzero(as_tuple=True)].view(-1)

|

| 192 |

+

|

| 193 |

+

mode = torch.mode(tmp, 0)[0].item()

|

| 194 |

+

print(mode)

|

| 195 |

+

assert 28 <= mode <= 32

|

| 196 |

+

volume[real_l_volume_.bool()] = mode

|

| 197 |

+

###########################

|

| 198 |

+

if tumor_szie > 0:

|

| 199 |

+

# augment: retry until enough of the distorted tumor stays inside the lung

|

| 200 |

+

while True:

|

| 201 |

+

real_l_volume = real_l_volume_

|

| 202 |

+

# randomly distort the mask

|

| 203 |

+

real_l_volume = elastic(real_l_volume, spatial_size=tuple(output_size)).as_tensor()

|

| 204 |

+

# get lung mask v2 (133 order)

|

| 205 |

+

lung_mask = (

|

| 206 |

+

(volume == 28).float()

|

| 207 |

+

+ (volume == 29).float()

|

| 208 |

+

+ (volume == 30).float()

|

| 209 |

+

+ (volume == 31).float()

|

| 210 |

+

+ (volume == 32).float()

|

| 211 |

+

)

|

| 212 |

+

|

| 213 |

+

lung_mask = dilate3d(lung_mask.squeeze(0), erosion=5)

|

| 214 |

+

lung_mask = erode3d(lung_mask, erosion=5).unsqueeze(0)

|

| 215 |

+

real_l_volume = real_l_volume * lung_mask

|

| 216 |

+

print(torch.sum(real_l_volume), "|", tumor_szie * 0.85)

|

| 217 |

+

if torch.sum(real_l_volume) >= tumor_szie * 0.85:

|

| 218 |

+

real_l_volume = dilate3d(real_l_volume.squeeze(0), erosion=5)

|

| 219 |

+

real_l_volume = erode3d(real_l_volume, erosion=5).unsqueeze(0).to(torch.uint8)

|

| 220 |

+

break

|

| 221 |

+

else:

|

| 222 |

+

real_l_volume = real_l_volume_

|

| 223 |

+

|

| 224 |

+

volume[real_l_volume == 1] = 23

|

| 225 |

+

|

| 226 |

+

pt_nda = volume.unsqueeze(0)

|

| 227 |

+

return pt_nda

|

| 228 |

+

|

| 229 |

+

|

| 230 |

+

def augmentation_tumor_pancreas(pt_nda, output_size, random_seed=None):

|

| 231 |

+

volume = pt_nda.squeeze(0)

|

| 232 |

+

real_l_volume_ = torch.zeros_like(volume)

|

| 233 |

+

real_l_volume_[volume == 4] = 1

|

| 234 |

+

real_l_volume_[volume == 24] = 2

|

| 235 |

+

real_l_volume_ = real_l_volume_.to(torch.uint8)

|

| 236 |

+

|

| 237 |

+

elastic = Rand3DElastic(

|

| 238 |

+

mode="nearest",

|

| 239 |

+

prob=1.0,

|

| 240 |

+

sigma_range=(5, 8),

|

| 241 |

+

magnitude_range=(100, 200),

|

| 242 |

+

translate_range=(15, 15, 15),

|

| 243 |

+

rotate_range=(np.pi / 36, np.pi / 36, np.pi / 36),

|

| 244 |

+

scale_range=(0.1, 0.1, 0.1),

|

| 245 |

+

padding_mode="zeros",

|

| 246 |

+

)

|

| 247 |

+

elastic.set_random_state(seed=random_seed)

|

| 248 |

+

|

| 249 |

+

tumor_szie = torch.sum(real_l_volume_ == 2)

|

| 250 |

+

###########################

|

| 251 |

+

# remove the predicted organ and tumor labels

|

| 252 |

+

volume[volume == 24] = 0

|

| 253 |

+

volume[volume == 4] = 0

|

| 254 |

+

# before moving the tumor mask, fill its original location with the organ label

|

| 255 |

+

volume[real_l_volume_ == 1] = 4

|

| 256 |

+

volume[real_l_volume_ == 2] = 4

|

| 257 |

+

###########################

|

| 258 |

+

while True:

|

| 259 |

+

real_l_volume = real_l_volume_

|

| 260 |

+

# randomly distort the mask

|

| 261 |

+

real_l_volume = elastic((real_l_volume == 2).cuda(), spatial_size=tuple(output_size)).as_tensor()

|

| 262 |

+

# get organ mask

|

| 263 |

+

organ_mask = (real_l_volume_ == 1).float() + (real_l_volume_ == 2).float()

|

| 264 |

+

|

| 265 |

+

organ_mask = dilate3d(organ_mask.squeeze(0), erosion=5)

|

| 266 |

+

organ_mask = erode3d(organ_mask, erosion=5).unsqueeze(0)

|

| 267 |

+

real_l_volume = real_l_volume * organ_mask

|

| 268 |

+

print(torch.sum(real_l_volume), "|", tumor_szie * 0.80)

|

| 269 |

+

if torch.sum(real_l_volume) >= tumor_szie * 0.80:

|

| 270 |

+

real_l_volume = dilate3d(real_l_volume.squeeze(0), erosion=5)

|

| 271 |

+

real_l_volume = erode3d(real_l_volume, erosion=5).unsqueeze(0)

|

| 272 |

+

break

|

| 273 |

+

|

| 274 |

+

volume[real_l_volume == 1] = 24

|

| 275 |

+

|

| 276 |

+

pt_nda = volume.unsqueeze(0)

|

| 277 |

+

return pt_nda

|

| 278 |

+

|

| 279 |

+

|

| 280 |

+

def augmentation_tumor_colon(pt_nda, output_size, random_seed=None):

|

| 281 |

+

volume = pt_nda.squeeze(0)

|

| 282 |

+

real_l_volume_ = torch.zeros_like(volume)

|

| 283 |

+

real_l_volume_[volume == 27] = 1

|

| 284 |

+

real_l_volume_ = real_l_volume_.to(torch.uint8)

|

| 285 |

+

|

| 286 |

+

elastic = Rand3DElastic(

|

| 287 |

+

mode="nearest",

|

| 288 |

+

prob=1.0,

|

| 289 |

+

sigma_range=(5, 8),

|

| 290 |

+

magnitude_range=(100, 200),

|

| 291 |

+

translate_range=(5, 5, 5),

|

| 292 |

+

rotate_range=(np.pi / 36, np.pi / 36, np.pi / 36),

|

| 293 |

+

scale_range=(0.1, 0.1, 0.1),

|

| 294 |

+

padding_mode="zeros",

|

| 295 |

+

)

|

| 296 |

+

elastic.set_random_state(seed=random_seed)

|

| 297 |

+

|

| 298 |

+

tumor_szie = torch.sum(real_l_volume_)

|

| 299 |

+

###########################

|

| 300 |

+

# before moving the tumor mask, fill its original location with the organ label

|

| 301 |

+

volume[real_l_volume_.bool()] = 62

|

| 302 |

+

###########################

|

| 303 |

+

if tumor_szie > 0:

|

| 304 |

+

# get organ mask

|

| 305 |

+

organ_mask = (volume == 62).float()

|

| 306 |

+

organ_mask = dilate3d(organ_mask.squeeze(0), erosion=5)

|

| 307 |

+

organ_mask = erode3d(organ_mask, erosion=5).unsqueeze(0)

|

| 308 |

+

# count distortion attempts so the loop below can relax the threshold and eventually stop

|

| 309 |

+

cnt = 0

|

| 310 |

+

while True:

|

| 311 |

+

threshold = 0.8

|

| 312 |

+

real_l_volume = real_l_volume_

|

| 313 |

+

if cnt < 20:

|

| 314 |

+

# randomly distort the mask

|

| 315 |

+

distored_mask = elastic((real_l_volume == 1).cuda(), spatial_size=tuple(output_size)).as_tensor()

|

| 316 |

+

real_l_volume = distored_mask * organ_mask

|

| 317 |

+

elif 20 <= cnt < 40:

|

| 318 |

+

threshold = 0.75

|

| 319 |

+

else:

|

| 320 |

+

break

|

| 321 |

+

|

| 322 |

+

real_l_volume = real_l_volume * organ_mask

|

| 323 |

+

print(torch.sum(real_l_volume), "|", tumor_szie * threshold)

|

| 324 |

+

cnt += 1

|

| 325 |

+

if torch.sum(real_l_volume) >= tumor_szie * threshold:

|

| 326 |

+

real_l_volume = dilate3d(real_l_volume.squeeze(0), erosion=5)

|

| 327 |

+

real_l_volume = erode3d(real_l_volume, erosion=5).unsqueeze(0).to(torch.uint8)

|

| 328 |

+

break

|

| 329 |

+

else:

|

| 330 |

+

real_l_volume = real_l_volume_

|

| 331 |

+

# break

|

| 332 |

+

volume[real_l_volume == 1] = 27

|

| 333 |

+

|

| 334 |

+

pt_nda = volume.unsqueeze(0)

|

| 335 |

+

return pt_nda

|

| 336 |

+

|

| 337 |

+

|

| 338 |

+

def augmentation_body(pt_nda, random_seed=None):

|

| 339 |

+

volume = pt_nda.squeeze(0)

|

| 340 |

+

|

| 341 |

+

zoom = RandZoom(min_zoom=0.99, max_zoom=1.01, mode="nearest", align_corners=None, prob=1.0)

|

| 342 |

+

zoom.set_random_state(seed=random_seed)

|

| 343 |

+

|

| 344 |

+

volume = zoom(volume)

|

| 345 |

+

|

| 346 |

+

pt_nda = volume.unsqueeze(0)

|

| 347 |

+

return pt_nda

|

| 348 |

+

|

| 349 |

+

|

| 350 |

+

def augmentation(pt_nda, output_size, random_seed=None):

|

| 351 |

+

label_list = torch.unique(pt_nda)

|

| 352 |

+

label_list = list(label_list.cpu().numpy())

|

| 353 |

+

|

| 354 |

+

if 128 in label_list:

|

| 355 |

+

print("augmenting bone lesion/tumor")

|

| 356 |

+

pt_nda = augmentation_tumor_bone(pt_nda, output_size, random_seed)

|

| 357 |

+

elif 26 in label_list:

|

| 358 |

+

print("augmenting liver tumor")

|

| 359 |

+

pt_nda = augmentation_tumor_liver(pt_nda, output_size, random_seed)

|

| 360 |

+

elif 23 in label_list:

|

| 361 |

+

print("augmenting lung tumor")

|

| 362 |

+

pt_nda = augmentation_tumor_lung(pt_nda, output_size, random_seed)

|

| 363 |

+

elif 24 in label_list:

|

| 364 |

+

print("augmenting pancreas tumor")

|

| 365 |

+

pt_nda = augmentation_tumor_pancreas(pt_nda, output_size, random_seed)

|

| 366 |

+

elif 27 in label_list:

|

| 367 |

+

print("augmenting colon tumor")

|

| 368 |

+

pt_nda = augmentation_tumor_colon(pt_nda, output_size, random_seed)

|

| 369 |

+

else:

|

| 370 |

+

print("augmenting body")

|

| 371 |

+

pt_nda = augmentation_body(pt_nda, random_seed)

|

| 372 |

+

|

| 373 |

+

return pt_nda

|
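The `augmentation` dispatcher checks tumor labels in a fixed order, so a volume that contains several tumor labels is only augmented for the first match. A small sketch of that priority rule as a lookup table follows. The label values are taken from the `if`/`elif` chain above, while `TUMOR_PRIORITY` and `pick_augmentation` are hypothetical names used only for illustration.

```python
# Tumor labels in the order the dispatcher checks them; names are illustrative.
TUMOR_PRIORITY = [
    (128, "bone"),
    (26, "liver"),
    (23, "lung"),
    (24, "pancreas"),
    (27, "colon"),
]


def pick_augmentation(labels):
    """Return the name of the first tumor type whose label is present, else "body"."""
    present = set(labels)
    for label, name in TUMOR_PRIORITY:
        if label in present:
            return name
    return "body"


choice = pick_augmentation([0, 1, 26, 23])  # liver wins over lung
```

Keeping the order in one table makes the precedence explicit; whether several tumor types should be augmented in the same volume is a design decision the original chain leaves to the first match.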

scripts/compute_fid_2-5d_ct.py

ADDED

|

@@ -0,0 +1,747 @@

|
